AI ChatGPT – The Next Great Hype Cycle?

Published: 2023-01-30

by Alison Porter - Portfolio Manager

Investors have quickly jumped into the hype and debate around ChatGPT. Launched at the end of November 2022, it reached over a million users in one week (it took Netflix 3.5 years), and Microsoft CEO Satya Nadella has called it “the biggest technological platform of the generation.” Its prominence as a trending topic on Google, Twitter and investment blogs certainly suggests it has entered a hype cycle!

Our team adopted artificial intelligence (AI) as one of our mega themes seven years ago. For decades, technology companies have focused on enhancing the interface between users and technology.

We view ChatGPT as another step on the path of AI’s integration into everything tech. From a user perspective, we continue to believe that AI integration is key to democratising technology usage over the long term, and that its development will be ‘evolutionary and not revolutionary’, similar to our view on the metaverse.

From an infrastructure perspective, we think ChatGPT is more meaningful. It brings us closer to a watershed shift from AI focused on perception (interpreting sensory data such as images, sound and video) to generative AI (creating new content), which will require exponentially greater computing power.

What or who is ChatGPT?

ChatGPT is a chatbot released by OpenAI, an artificial intelligence research company founded in 2015 by Sam Altman and Elon Musk, amongst others. OpenAI was originally set up to ensure that AI was developed with a focus on safety and benefits to humans. It began as a non-profit organisation and evolved into what is known as a ‘capped-profit’ company, a hybrid of a for-profit entity (OpenAI LP) and a non-profit (OpenAI Nonprofit), so that it could scale up by raising additional capital and attracting talent. The limited partnership currently has several hundred employees, with Microsoft as lead investor (circa $1bn of initial funding in 2019, with a further $10bn confirmed recently). OpenAI has three primary offerings today: ChatGPT, the image generator DALL·E 2 and the automatic speech recognition model Whisper.

ChatGPT is built on OpenAI’s Generative Pre-trained Transformer (GPT) family of large language models (LLMs); specifically, it was fine-tuned from a model in the GPT-3.5 series. Users submit questions, and ChatGPT is designed to respond with human-like, coherent and natural answers. In simple terms, it is a broad-based, highly sophisticated chatbot whose answers read as though they come from a human. This human-like, conversational quality is why, looking ahead, some refer to ChatGPT not as a ‘what’ but as a ‘who’.

GPT-3 enables a better understanding of context and is paving the way for generative AI, as opposed to responses and analysis based on existing data. In the past, neural networks were trained on data labelled by humans, which was time-consuming, costly and limiting. Transformer models such as GPT instead learn largely from unlabelled text (self-supervised learning): they require much less human curation, but much larger datasets and far more computing power to train ever-larger models. With GPT-4 potentially launching later in 2023, there will likely be further improvements in user experience and interface, and progress along the path towards monetisation.
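To illustrate why this kind of pre-training needs so little human labelling, here is a minimal Python sketch of the data-preparation idea behind next-token prediction. It assumes simple whitespace tokenisation, and the helper `next_token_pairs` is hypothetical: the ‘label’ for each training example is just the next word in the text, so raw text supplies its own targets. It is not a description of how OpenAI actually trains GPT models.

```python
from typing import List, Tuple

Pair = Tuple[Tuple[str, ...], str]


def next_token_pairs(text: str, context_size: int) -> List[Pair]:
    """Turn raw, unlabelled text into (context, next-token) training examples.

    Hypothetical helper for illustration. Tokens are whitespace-separated
    words, a simplification: real GPT models use subword tokenisers.
    """
    tokens = text.split()
    pairs: List[Pair] = []
    for i in range(1, len(tokens)):
        # The "label" is simply the token that follows the context window,
        # so no human annotation is needed.
        context = tuple(tokens[max(0, i - context_size):i])
        pairs.append((context, tokens[i]))
    return pairs


for context, target in next_token_pairs("the cat sat on the mat", context_size=3):
    print(context, "->", target)
```

Every position in every document becomes a training example, which is why this approach scales with the amount of text available rather than with the number of human labellers, and why it demands so much more data and compute.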

ChatGPT’s implications for the tech universe

Microsoft’s CEO recently confirmed that the company aims to commercialise the technology rapidly: its Azure OpenAI Service cloud offering is now generally available, OpenAI’s text-to-image generator DALL·E 2 is being integrated into the new Designer app, and the company intends to incorporate ChatGPT into its Bing search engine and other Microsoft Office functions.

Where will this disruption be felt the most?

1. Disruption to search – the innovator’s dilemma

The first area where ChatGPT is thought to represent a disruption threat is Google Search, based on the notion that natural language models could attract users and then take a share of search queries, becoming a new entry point to the internet. ChatGPT’s responses can make Google Search’s results seem basic, thanks to its conversational abilities, filtering technology and capacity to ingest data.

In voice recognition, Apple’s Siri and Amazon’s Alexa have already posed challenges to search, as have TikTok and Instagram with video search. When app stores emerged, there were concerns about the impact of direct-to-app traffic on Google’s search business. Ultimately, the evolution of app-store and in-app search benefited Google because of its unparalleled ability to scrape information from all parts of the internet. While we recognise the emergence of technologies that could disrupt search, the law of large numbers, cyclical pressures and privacy-related threats could be more pressing for Alphabet in the near term.

We are not dismissive of the threat, but we think this view is overly simplistic. Google was one of the earliest and strongest proponents of AI and machine learning, first mentioning them in its 2005 annual report, nearly two decades ago. Alphabet’s most recent investor call described AI, including AI-powered search and large language models, as the most important of its four key investment initiatives: AI, YouTube, hardware and cloud. Alphabet has invested heavily in AI, spending circa $177bn¹ on research and development and capital expenditure between 2020 and 2022, and around half of its employees are focused in some way on AI and machine learning.

Alphabet has several AI products already in operation, but it must consider how to develop and adapt these without cannibalising its existing and highly profitable search business (the innovator’s dilemma) and without raising significant regulatory and moral concerns.

Beyond monetisation there are numerous considerations when developing these AI models:

- Trust and accuracy

LaMDA, Google’s natural language model, is similar to ChatGPT and was famously called ‘sentient’ by one of its engineers. While these models are strong conversationally, the complexity of human conversation and language means ChatGPT’s results still fall short on accuracy, context and trust. So, the issue facing Google Search and Microsoft Bing is not simulating answers, but ensuring that ‘generative’ answers do not become ‘fabricated’ results without credible sources. Introducing generative AI into search will likely lead to more regulatory scrutiny of AI, given its potential for negative consequences. Google Search limits potentially harmful searches, such as how to hotwire a car, build a bomb or stalk someone online. ChatGPT currently has only limited restrictions on the type of query it answers and no filters on accuracy or truth, which can be dangerous given its ability to mimic human writing.

- Scale and timeliness

Google has an estimated 4bn+ daily users, making billions of searches per day. Search accuracy improves iteratively, and users want the most accurate information. While ChatGPT can deal with complex questions, it lags on timeliness, and hence on the accuracy of its answers, because its knowledge is limited to training data with a 2021 cut-off.

Google has seen a rapid rise in queries that are location-based or require time-bound answers, e.g. ‘latest’ or ‘news now’. The advantage of Google Search, which Bing and other search engines have been unable to replicate, is not only its ability to index and serve results, but also its crawling ability: discovering and downloading text, images and videos using automated programs. There is no central registry of web pages, so continuously discovering and updating pages is key; up-to-date information such as store opening and closing times, news from across the web and data is essential for accurate and timely responses. The volume and consistency of consumer activity on Google speaks to the utility users derive from it, so driving significant differentiation and change will be challenging.
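Because there is no central registry, new pages are found by following links from pages that are already known. A minimal Python sketch of that discovery step is a breadth-first traversal from seed URLs; it assumes a hypothetical in-memory link graph (`WEB`) in place of real HTTP fetching, and the `discover` helper is illustrative only, not how Google’s crawler is built.

```python
from collections import deque
from typing import Dict, List, Set


def discover(seeds: List[str], links: Dict[str, List[str]], max_pages: int) -> List[str]:
    """Breadth-first page discovery: start from seed URLs and follow outgoing links.

    Hypothetical helper for illustration; `links` stands in for the web.
    """
    seen: Set[str] = set(seeds)
    frontier = deque(seeds)
    visited: List[str] = []
    while frontier and len(visited) < max_pages:
        url = frontier.popleft()
        visited.append(url)  # a real crawler would download and index the page here
        for nxt in links.get(url, []):
            if nxt not in seen:  # queue each newly discovered URL only once
                seen.add(nxt)
                frontier.append(nxt)
    return visited


# Hypothetical link graph. The orphan page links out, but nothing links to it.
WEB = {
    "a.example/": ["a.example/news", "b.example/"],
    "a.example/news": ["c.example/store-hours"],
    "b.example/": ["a.example/", "c.example/store-hours"],
    "c.example/store-hours": [],
    "d.example/orphan": ["a.example/"],
}

print(discover(["a.example/"], WEB, max_pages=10))
```

The orphan page is never found because no known page links to it, which is the practical consequence of having no central registry. A production crawler would also revisit known pages on a schedule to keep content such as store hours fresh, respect robots.txt and rate limits, and parse links out of downloaded HTML; none of that is modelled here.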

- Cost

There are fundamental differences between how Google indexes pages and how large language models ingest data. Indexing at Google’s scale would be cost-prohibitive for ChatGPT: its current cost is estimated at around $0.02 per query on average, and is highly dependent on the number of words generated per query and the size of the model. That is around seven times the cost of a Google search, which will make commercial monetisation challenging without significantly greater spending on compute power.
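To make the cost gap concrete, the Python sketch below reworks the two estimates in this paragraph ($0.02 per ChatGPT query, roughly seven times a Google search) into an implied per-search cost and a daily serving cost. The volume of one billion queries per day is a hypothetical round number chosen purely for illustration, not an estimate of Google’s or ChatGPT’s actual traffic, and the helper names are likewise illustrative.

```python
CHATGPT_COST_PER_QUERY_USD = 0.02  # estimated average cost per ChatGPT query, as cited
COST_MULTIPLE_VS_SEARCH = 7        # ChatGPT costs roughly 7x a Google search, as cited

# HYPOTHETICAL volume for illustration only; not a figure from the article.
QUERIES_PER_DAY = 1_000_000_000


def implied_search_cost_per_query(chat_cost: float, multiple: float) -> float:
    """Per-query search cost implied by the ChatGPT cost and the cost multiple."""
    return chat_cost / multiple


def daily_cost(cost_per_query: float, queries_per_day: float) -> float:
    """Total daily serving cost at a given query volume."""
    return cost_per_query * queries_per_day


search_cost = implied_search_cost_per_query(CHATGPT_COST_PER_QUERY_USD, COST_MULTIPLE_VS_SEARCH)
chat_daily = daily_cost(CHATGPT_COST_PER_QUERY_USD, QUERIES_PER_DAY)
search_daily = daily_cost(search_cost, QUERIES_PER_DAY)
extra = chat_daily - search_daily

print(f"Implied search cost per query: ${search_cost:.4f}")
print(f"ChatGPT-style daily cost:      ${chat_daily:,.0f}")
print(f"Search daily cost:             ${search_daily:,.0f}")
print(f"Incremental daily cost:        ${extra:,.0f}")
```

On these assumptions, a search query implicitly costs about $0.003, and serving a billion queries a day would cost about $20m with a ChatGPT-style model versus about $2.9m for search, an extra $17m or so per day. The gap scales linearly with volume, which is why the per-query cost matters so much at search scale.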

2. Impact on computing power, the cloud, and semiconductors

While GPT-2 (late 2019) was released with only 1.5 billion parameters, GPT-3 was trained with 175bn parameters, and GPT-4 is expected to take this into the trillions. Microsoft estimates that the computing requirements for AI training double every 3.5 months. As a result, graphics processing unit (GPU) designer NVIDIA built its latest Hopper GPU architecture with a dedicated Transformer Engine, enabling up to 9x the AI training performance of its predecessor, or 3x the performance at the same power, an increasingly important metric given climate change targets.
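These growth rates compound quickly. As a rough arithmetic check, the Python sketch below takes the cited 3.5-month doubling time at face value and computes the implied growth over one and two years, alongside the GPT-2 to GPT-3 parameter ratio from the figures in this paragraph. The helper `growth_factor` is hypothetical, and treating the doubling period as constant is a simplifying assumption.

```python
DOUBLING_PERIOD_MONTHS = 3.5  # doubling time for AI training compute, as cited

GPT2_PARAMS = 1.5e9   # parameters, as cited
GPT3_PARAMS = 175e9


def growth_factor(months: float, doubling_period: float = DOUBLING_PERIOD_MONTHS) -> float:
    """Growth multiple after `months`, assuming a constant doubling period."""
    return 2 ** (months / doubling_period)


annual = growth_factor(12)
two_year = growth_factor(24)
param_ratio = GPT3_PARAMS / GPT2_PARAMS

print(f"Compute growth per year:   {annual:.1f}x")
print(f"Compute growth over 2 yrs: {two_year:.0f}x")
print(f"GPT-2 -> GPT-3 parameters: {param_ratio:.0f}x")
```

A 3.5-month doubling time implies roughly 10.8x more training compute each year and roughly 116x over two years, the same order of magnitude as the ~117x jump in parameter count from GPT-2 to GPT-3. Parameter count and training compute are different quantities, so the comparison is only indicative of scale.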

Mega-cap tech companies have been announcing plans for AI investment in recent years. Particularly notable is Meta’s 2023 capital expenditure guidance of more than $35bn, largely driven by further investment in AI/machine learning and higher-end GPUs to provide more analytics and computing power for its algorithms.

Given a weaker economic backdrop and revenue outlook for the next three months, we have witnessed the birth of cost consciousness within large-cap tech companies. Despite headcount reductions at some of these companies, we believe spending will continue to be directed towards AI/ML applications viewed as having higher monetisation opportunities, with ChatGPT adding fuel to an AI arms race amongst hyperscalers.

The increasing use of AI has positive implications for the semiconductor giants and will accelerate the shift to cloud computing: the intensity of compute power required favours pooling resources and raises capital expenditure barriers to entry.

3. Threat of competition in software

A recent survey by networking app Fishbowl² showed that drafting emails and generating bits of code are typical use cases for users of ChatGPT and other AI tools. It also revealed a broad-based pick-up in usage across a variety of industries, with over 30% of respondents in marketing and advertising, tech and consulting having used these tools at work.

ChatGPT’s underlying GPT-3.5 technology could be deeply disruptive to several areas:

- Coding and software development

The success of Microsoft’s GitHub Copilot and DeepMind’s AlphaCode shows that this technology can help both to automate coding and to improve the quality of that code. Coding can be very expensive, and there is an opportunity to use machine learning to extend what started as low-code/no-code platforms. On New Year’s Day this year, Andrej Karpathy, Tesla’s former director of AI who led the computer vision work behind Autopilot, tweeted that 80% of the code he writes today is done using GitHub Copilot.

- Detection of data security vulnerabilities

OpenAI’s models have been shown to detect some security vulnerabilities in code samples.

- Education, essay-writing capabilities, maths questions and tutoring availability

ChatGPT has caused concern in academia given its ability to produce books and essays in a short period of time, and it recently even passed a Wharton MBA exam. Companies such as Chegg have built competitive moats around answering complex student questions; while ChatGPT cannot yet match that level of response, generative AI is getting better, and quickly.

- Medicine and vaccine development

Pattern matching against a defined knowledge base is a growing use case and opportunity. However, many scientists are also concerned that AI can write convincing fake research that researchers may find very difficult to distinguish from genuine work.

- Customer service and sales functions

There is an opportunity to extend virtual agent models. Businesses could use ChatGPT to give employees access to key information, answering questions such as: What is Janus Henderson’s latest view on 10-year Treasuries? What is Janus Henderson’s maternity leave policy? While such answers may face many of the same accuracy and timeliness issues as search, over the longer term this may create a competitive headwind for companies such as Salesforce and HubSpot.

- Content creation

An unappreciated implication of recent AI developments is the impact on content creators and software developers. Alphabet’s DeepMind subsidiary announced the release of Dramatron, a scriptwriting tool that lets writers co-create theatre and film scripts (complete with title, characters, location descriptions and dialogue). Meanwhile, OpenAI’s DALL·E 2 can create realistic images and art from a description in natural language; for example, in just two minutes it was able to generate an image of what the Mona Lisa might look like with her full body shown.

- Simulation

Podcast.ai, a series of completely AI-generated podcasts, released a 20-minute interview between Joe Rogan and the late Steve Jobs touching on faith, tech companies and drugs. AI can effectively provide tools for creators, giving them the ability to create without deep coding knowledge. As with search, the lines between reality and simulation are becoming blurred, and trust in creators and moderation will become more important. This has relevance for how we see new content being created in the digital world of the metaverse.

There is a significant opportunity for hyperscalers such as Amazon Web Services, Microsoft Azure, Google Cloud Platform and Meta to accelerate the rollout of AI processes for their own applications, and over the long term this will create competitive challenges for application software providers. We see this as a key reason for tech giants such as Microsoft and Amazon investing in OpenAI, driving its potential valuation up to $29bn.³

Evolution, not revolution

Artificial intelligence is coming of age, but, similar to our view on the metaverse, we see this as an evolution that has long been underway rather than a revolution. There continues to be a powerful convergence of key technology themes: for example, next-generation infrastructure is enabling greater computing power, which facilitates AI and metaverse developments, which in turn require yet more computing power.

Hence, while we continue to be excited by the opportunities that AI and ChatGPT provide, and by the many beneficiaries of the metaverse and the broader shift to AI, we also recognise the cyclical pressures and regulatory hurdles to be overcome before we see widescale adoption.

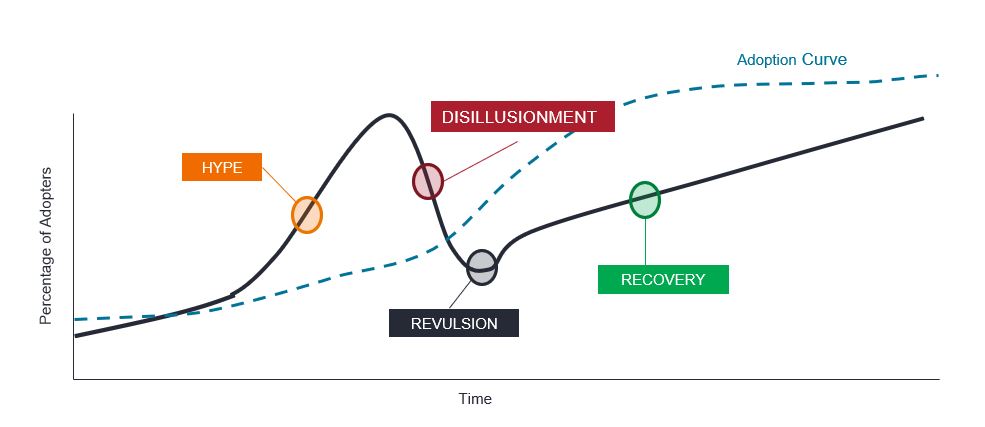

The hype cycle

Note: for illustrative purposes only.

As experienced tech investors, we caution against getting caught up in niche thematics, and advise considering not only the long-term opportunity but also existing competitive moats and the pace of the tech adoption curve, navigating the hype cycle and exercising reasonable valuation discipline.

ENDNOTES & DEFINITIONS

1 JP Morgan North America Research: Internet, as at 19 January 2023, figures for 2020-22E.

2 Fishbowl, 17 January 2023: ChatGPT Sees Strong Early Adoption in the Workplace.

3 The Wall Street Journal, 5 January 2023.

Hyperscalers: companies that provide infrastructure for cloud, networking, and internet services at scale. Examples include Google, Microsoft, Facebook, Alibaba, and Amazon Web Services (AWS).

Tech democratisation: the process by which technology rapidly becomes accessible to more people. Drivers include new technologies and improved user experiences, broader participation in product development, and more affordable, user-friendly products resulting from industry innovation and user demand.

Innovator’s dilemma: a theory describing how companies’ successes and capabilities can become obstacles when they face changing markets and technologies. Large companies tend to overlook disruptive technologies until these become more attractive profit-wise. Disruptive technologies, however, eventually surpass sustaining technologies in satisfying market demand at lower cost. When this happens, large companies that did not invest in the disruptive technology sooner are left behind.

Navigating the hype cycle: the “hype cycle” represents the different stages in the development of a technology, from conception to widespread adoption, which includes investor sentiment towards that technology and related stocks during the cycle.